Onboarding for legacy service rewrite

We have a new engineer joining our team next week, and she's fairly new to software testing. The codebase she currently works in isn't exactly a beacon of shining perfection, so I don't presume she's ramped up on maintainable testing strategies.

I'm gathering my notes and references here so I can give her a useful onboarding to the testing practices we want to put in place on the new service, which replaces one of our existing legacy services.

For more context, we're replacing a problematic legacy service where the majority of the "unit tests" are actually integration tests that rely heavily on mocks, which has had the side effect of making our codebase quite rigid and brittle.

Strangler Pattern

This pattern is a well-known approach to replacing a legacy system. Most of the time, it's a better idea to refactor a legacy system towards a better design, but in some cases that isn't effective. These cases are rarer than you'd expect: if you think a rewrite is the only solution, you're probably wrong. But for us, it's the only solution ;)

When the testing infrastructure is coupled to the implementation of the codebase to the degree that any minor change can result in hundreds of broken tests across dozens of files... Well, consider it an indicator to look at your options.

The Strangler Pattern introduces a second track of operations, and vertical slices of the service are then rebuilt in the second track. Once all the vertical slices are present and proven in the second track, the original track is removed, effectively "strangling out" the technical debt, or problematic design.
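To make the two tracks concrete, here is a minimal sketch of the routing idea. Everything in it is hypothetical: `StranglerRouter`, the slice names, and the handlers are illustrations I made up for these notes, not our actual service code. The point is only that each vertical slice is switched over individually, and the legacy track can go once every slice has a replacement.

```python
from typing import Callable, Dict

Handler = Callable[[dict], dict]


class StranglerRouter:
    """Sends each vertical slice to the legacy track or the new track."""

    def __init__(self, legacy: Dict[str, Handler]) -> None:
        self._legacy = dict(legacy)
        self._replacement: Dict[str, Handler] = {}

    def migrate(self, slice_name: str, handler: Handler) -> None:
        """Switch one slice over to the second track."""
        if slice_name not in self._legacy:
            raise KeyError(f"unknown slice: {slice_name}")
        self._replacement[slice_name] = handler

    def handle(self, slice_name: str, request: dict) -> dict:
        # Prefer the rebuilt slice; fall back to the legacy one.
        handler = self._replacement.get(slice_name) or self._legacy[slice_name]
        return handler(request)

    def legacy_can_be_removed(self) -> bool:
        # True once every legacy slice has a proven replacement.
        return set(self._replacement) == set(self._legacy)


# Hypothetical slices, for illustration only.
def legacy_quote(request: dict) -> dict:
    return {"total": request["qty"] * 10, "track": "legacy"}


def legacy_invoice(request: dict) -> dict:
    return {"invoice_id": request["order_id"], "track": "legacy"}


def new_quote(request: dict) -> dict:
    return {"total": request["qty"] * 10, "track": "new"}


router = StranglerRouter({"quote": legacy_quote, "invoice": legacy_invoice})
router.migrate("quote", new_quote)
```

After this, `router.handle("quote", ...)` is served by the new track while `"invoice"` still goes to the legacy code, and `legacy_can_be_removed()` stays false until the invoice slice is migrated too.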

We will be leaving the majority of our existing tests behind. To be confident that a vertical slice has been correctly implemented in the second track, we will use a few techniques, and functional tests are the critical one.

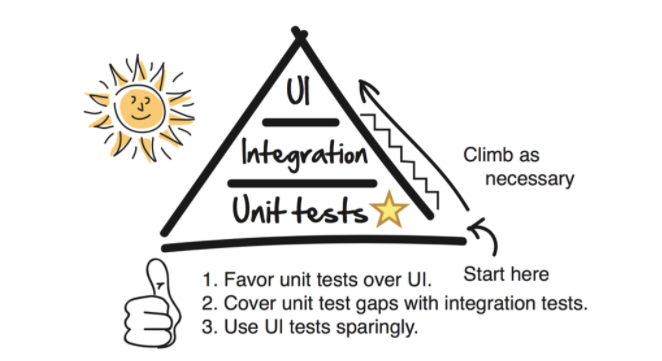

Testing Pyramid

For our purposes, functional (acceptance) tests take the place of UI tests. To run our functional tests, we'll leverage an in-house tool that orchestrates a containerized system and sets the database state between functional test cases.

A functional test proves that the entire system is operating as expected for a given function of the system. Functional tests are costly to implement and costly to run, so we'll have as few as possible. As a rule of thumb, I like having a single functional test for the happy path, and then one functional test for each class of exception, since hopefully we are handling all exceptions with a consistent policy.

To temporarily raise confidence even further, a Golden Master technique can be used: run a large quantity of sampled input through both tracks and compare the outputs. We will most likely be doing this in production with real data, running both tracks at the same time, and comparing the results.
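A rough sketch of the comparison step, under heavy assumptions: the two shipping-cost functions are made-up stand-ins for a legacy slice and its rewrite (with a deliberate bug planted in the rewrite), and the samples come from a seeded random generator rather than production traffic. `compare_tracks` is a hypothetical helper, not part of any tool we use.

```python
import random
from typing import Callable, Iterable, List, Tuple


def compare_tracks(
    legacy: Callable[[int], int],
    replacement: Callable[[int], int],
    samples: Iterable[int],
) -> List[Tuple[int, int, int]]:
    """Return (input, legacy_output, replacement_output) for every mismatch."""
    mismatches = []
    for sample in samples:
        expected = legacy(sample)      # the legacy track is the "golden master"
        actual = replacement(sample)
        if expected != actual:
            mismatches.append((sample, expected, actual))
    return mismatches


def legacy_shipping_cost(weight_kg: int) -> int:
    # Hypothetical legacy behaviour: flat fee plus a per-kilogram charge.
    return 500 + 120 * weight_kg


def new_shipping_cost(weight_kg: int) -> int:
    # Hypothetical rewrite with a planted bug for heavy parcels.
    if weight_kg > 30:
        return 500 + 100 * weight_kg
    return 500 + 120 * weight_kg


rng = random.Random(42)  # seeded so a failing run can be reproduced
samples = [rng.randint(1, 50) for _ in range(1000)]
mismatches = compare_tracks(legacy_shipping_cost, new_shipping_cost, samples)
```

Every entry in `mismatches` points at an input where the second track disagrees with the first, which here is only the heavy-parcel case. Note that the legacy output is treated as correct by definition, so this checks equivalence, not correctness.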

Rebuilding

As we build out the replacement system, we'll be duplicating certain modules, decoupling them from their original implementation and inverting dependencies. I'm expecting a lot of "hack and slash" to get us to running code on the second track. Once we have running code passing our functional test, we will trim back and stabilize with more unit tests.

Code Quality

As we bring over vertical slices of behaviour, we continuously run our testing pipeline to ensure our tests stay green. We can also leverage static analysis tools to tell us which modules are most deserving of extra attention.
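As a toy illustration of what such a tool reports, the sketch below counts branching constructs per function with Python's standard `ast` module and ranks functions by that count. This is a crude stand-in for a real complexity metric, not a replacement for a proper analyzer; `branch_counts` and the sample source are made up for this example.

```python
import ast
from typing import Dict

# Node types treated as "branches" in this rough approximation.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.Try, ast.BoolOp, ast.IfExp)


def branch_counts(source: str) -> Dict[str, int]:
    """Map each function name to 1 + the number of branching nodes inside it."""
    counts: Dict[str, int] = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            branches = sum(
                isinstance(child, BRANCH_NODES) for child in ast.walk(node)
            )
            counts[node.name] = 1 + branches
    return counts


# Hypothetical module source, for illustration only.
SAMPLE = '''
def simple(x):
    return x + 1

def tangled(order):
    if order.get("rush"):
        for item in order["items"]:
            if item["qty"] > 10 and item["fragile"]:
                return "manual"
    return "auto"
'''

counts = branch_counts(SAMPLE)
# Highest count first: these are the candidates for extra attention.
ranked = sorted(counts.items(), key=lambda pair: pair[1], reverse=True)
```

Here `tangled` ranks above `simple`, which matches the intuition that nested conditionals and loops are where refactoring effort pays off first.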

Techniques and rules of thumb

- Using Strangler Pattern for replacing a legacy system

- Using Tracer Bullet approach to get to running code as quickly as possible

- Leveraging functional tests and Golden Master tests to ensure correctness

- Leveraging static analysis to guide refactoring

- When structuring the application, simplify the testing of business logic by adopting the pattern of Functional Core, Imperative Shell

- When writing new unit tests, "Test the behaviour, not the implementation"

- When writing unit tests, avoid mocks – they couple tests to implementation

- When writing unit tests, test as little as possible – test public interface, but don't bother testing trivial code

- Separate tests into Fast Tests and Slow Tests

- When writing components, use dependency inversion so that lower level components are passed in as arguments or provided by a dependency injection framework

More generally, unit tests that prove behaviour and don't assume application structure make refactoring easier.
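Several of these rules of thumb fit together in one small sketch: a pure functional core, a thin imperative shell that receives its dependency as an argument, and a hand-written in-memory fake in place of a mock. All names here (`LineItem`, `order_total`, `OrderStore`, `price_order`, `InMemoryOrderStore`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Protocol


@dataclass(frozen=True)
class LineItem:
    price_cents: int
    quantity: int


# Functional core: pure business logic, no I/O, trivially fast to test.
def order_total(items: List[LineItem], discount_percent: int = 0) -> int:
    subtotal = sum(item.price_cents * item.quantity for item in items)
    return subtotal * (100 - discount_percent) // 100


# The shell depends on this abstraction, not on a concrete database client.
class OrderStore(Protocol):
    def load_items(self, order_id: str) -> List[LineItem]: ...
    def save_total(self, order_id: str, total_cents: int) -> None: ...


# Imperative shell: does the I/O and delegates every decision to the core.
def price_order(order_id: str, store: OrderStore, discount_percent: int = 0) -> int:
    total = order_total(store.load_items(order_id), discount_percent)
    store.save_total(order_id, total)
    return total


# A simple fake: it behaves like a store, so tests assert on outcomes
# rather than on which methods were called and in what order.
class InMemoryOrderStore:
    def __init__(self, items_by_order: Dict[str, List[LineItem]]) -> None:
        self._items = items_by_order
        self.totals: Dict[str, int] = {}

    def load_items(self, order_id: str) -> List[LineItem]:
        return self._items[order_id]

    def save_total(self, order_id: str, total_cents: int) -> None:
        self.totals[order_id] = total_cents
```

A test of `order_total` needs no setup at all, and a test of `price_order` only checks that the right total ends up in the store. Neither test breaks if the shell is later restructured, as long as the behaviour stays the same.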

If it's not obvious, I'm pulling heavily from the following:

- https://alisterbscott.com/kb/testing-pyramids/

- https://testing.googleblog.com/2015/04/just-say-no-to-more-end-to-end-tests.html

- https://martinfowler.com/articles/practical-test-pyramid.html

- https://shopify.engineering/refactoring-legacy-code-strangler-fig-pattern

- https://github.com/analysis-tools-dev/static-analysis

- https://blog.thecodewhisperer.com/permalink/surviving-legacy-code-with-golden-master-and-sampling

"Programmers write unit tests so that their confidence in the operation of the program can become part of the program itself. Customers write functional tests so that their confidence in the operation of the program can become part of the program too." (Kent Beck)